GPT-5.4 vs Claude Opus 4.6 vs Gemini 3.1 Pro: Best Frontier AI Model (March 2026)

By AI Workflows Team · March 8, 2026 · 16 min read

Comprehensive three-way comparison of GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro. Benchmarks, pricing, coding performance, agentic capabilities, and expert recommendations for March 2026.

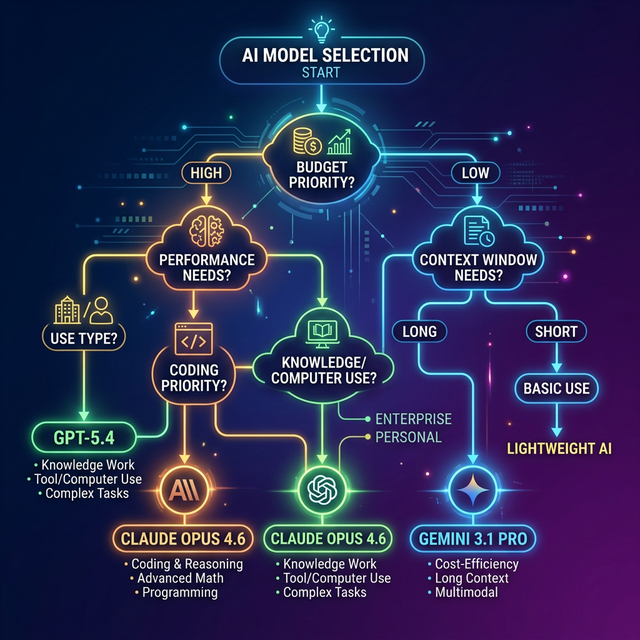

TL;DR — Quick Decision Guide

The March 2026 frontier AI landscape is a genuine three-horse race for the first time. Here's the fast version:

- GPT-5.4 ($2.50/$15 per MTok) dominates knowledge work (GDPval 83%) and computer use (OSWorld 75%, surpassing human experts). First model with native desktop control.

- Claude Opus 4.6 ($5/$25 per MTok) leads coding (SWE-Bench Verified 80.8%) and offers the deepest adaptive reasoning. The developer favorite.

- Gemini 3.1 Pro ($2/$12 per MTok) wins on abstract reasoning (GPQA Diamond 94.3%) and delivers the most value per dollar with 2M token context.

| Feature | GPT-5.4 | Claude Opus 4.6 | Gemini 3.1 Pro |

|---|---|---|---|

| Input/Output Price | $2.50 / $15 | $5 / $25 | $2 / $12 |

| Context Window | 1M (Codex) / 272K | 200K (1M beta) | 2M |

| Best Category | Knowledge work + Computer use | Coding + Reasoning depth | Reasoning breadth + Cost |

| GDPval | 83.0% | 78.0% | — |

| SWE-Bench Verified | — | 80.8% | 80.6% |

| GPQA Diamond | 92.8% | 91.3% | 94.3% |

| OSWorld | 75.0% | 72.7% | — |

| Release Date | March 5, 2026 | February 4, 2026 | February 19, 2026 |

Bottom line: There is no single "best" model anymore. The winner depends entirely on your primary use case. Read on for the detailed breakdown.

The March 2026 Frontier Landscape

Three companies now field frontier models that match or exceed human expert performance on specialized benchmarks — and each model represents a fundamentally different design philosophy.

OpenAI released GPT-5.4 on March 5 as its most capable model for professional knowledge work and autonomous computer control. The model integrates the programming capabilities of GPT-5.3-Codex while introducing native computer use — a first for any frontier model.

Anthropic's Claude Opus 4.6 (February 4) remains the SWE-Bench coding benchmark leader with the deepest adaptive reasoning capabilities. With a 128K maximum output and Agent Teams for multi-agent orchestration, it's the model developers reach for instinctively.

Google DeepMind's Gemini 3.1 Pro (February 19) offers the highest abstract reasoning scores at the lowest price point among the three. Its 2M token context window is 10× larger than Opus and 2× larger than GPT-5.4's Codex mode, making it the undisputed leader for long-context workloads.

Each model optimizes for entirely different workflows. OpenAI bet on applied professional productivity. Anthropic doubled down on surgical coding precision. Google pushed toward maximum reasoning breadth at aggressive pricing. Understanding these trade-offs is the key to choosing the right model.

Full Benchmark Showdown

The master comparison table below covers all reported benchmarks across knowledge work, reasoning, agentic AI, computer use, and coding. The data comes from official vendor reports and primary sources as of March 2026.

Knowledge Work & Professional Tasks

| Benchmark | GPT-5.4 | GPT-5.4 Pro | Claude Opus 4.6 | Gemini 3.1 Pro |

|---|---|---|---|---|

| GDPval | 83.0% | — | 78.0% | — |

| BrowseComp | 82.7% | — | 84.0% | — |

| Spreadsheet (Junior Analyst) | 87.3% | — | — | — |

GPT-5.4's 83% GDPval score is the standout result. This benchmark tests AI against industry professionals across 44 occupations — accountants, lawyers, analysts, project managers — and GPT-5.4 meets or exceeds their aggregate performance in more than four out of five cases. No other model has reported a comparable GDPval score, making GPT-5.4 the clear leader for applied professional tasks.

The model also shows significant progress in reducing hallucinations: individual statements from GPT-5.4 are 33% less likely to be false, and complete answers contain 18% fewer errors compared to GPT-5.2.

Abstract Reasoning

| Benchmark | GPT-5.4 | GPT-5.4 Pro | Claude Opus 4.6 | Gemini 3.1 Pro |

|---|---|---|---|---|

| GPQA Diamond | 92.8% | 94.3% | 91.3% | 94.3% |

| ARC-AGI-2 | 73.3% | — | 75.2% | 77.1% |

| FrontierMath | 47.6% | 50.0% | 40.7% | 36.9% |

| MMMU Pro | 81.2% | — | 85.1% | — |

Gemini 3.1 Pro pulls ahead on abstract reasoning. Its 94.3% GPQA Diamond matches GPT-5.4 Pro's score while costing 15× less ($2 input vs $30). On ARC-AGI-2, Gemini leads at 77.1% — ahead of Opus (75.2%) and GPT-5.4 (73.3%).

However, GPT-5.4 Pro dominates FrontierMath at 50.0%, and Opus 4.6 leads MMMU Pro (visual reasoning) at 85.1%. The split is clear: Gemini for pattern recognition, GPT-5.4 Pro for advanced mathematics, Opus for visual reasoning.

Coding & Software Engineering

| Benchmark | GPT-5.4 | Claude Opus 4.6 | Gemini 3.1 Pro |

|---|---|---|---|

| SWE-Bench Verified | — | 80.8% | 80.6% |

| SWE-Bench Pro | 57.7% | — | 54.2% |

| Terminal-Bench 2.0 | 75.1% | 65.4% | 68.5% |

Coding is where the competition gets fierce. Opus 4.6 holds the overall SWE-Bench Verified lead at 80.8% — but only 0.2 percentage points above Gemini (80.6%). As the EvoLink analysis correctly notes, this difference is within the margin where test conditions matter more than model capability.

The Terminal-Bench 2.0 gap, however, is decisive: GPT-5.4 at 75.1% leads Opus 4.6 by nearly 10 points. This benchmark tests sustained terminal-heavy coding workflows — exactly the pattern used in OpenAI Codex, Cursor, and similar agentic coding environments.

Important caveat: SWE-bench scores from different vendors use different scaffolds and evaluation setups. Don't over-index on 0.2% differences between models.

For a practical comparison of these models in coding tools, see our Best AI Coding Tools 2026 comparison.

Agentic AI & Computer Use

Agentic AI benchmarks test whether models can autonomously navigate desktops, coordinate tools, browse the web, and complete multi-step workflows without human intervention. This is the most consequential new dimension in the March 2026 comparison.

GPT-5.4: The Computer Use Pioneer

GPT-5.4 is OpenAI's first universal model with native computer use capabilities. It can operate websites and software systems by writing code or executing mouse and keyboard commands in response to screenshots.

| Benchmark | GPT-5.4 | Claude Opus 4.6 | Gemini 3.1 Pro |

|---|---|---|---|

| OSWorld-Verified | 75.0% | 72.7% | — |

| WebArena-Verified | 67.3% | 66.4% | — |

| Toolathlon | 54.6% | 44.8% | — |

| MCP Atlas | 67.2% | — | 69.2% |

GPT-5.4's 75% OSWorld score is the headline result. This is the first frontier model to surpass human expert performance (72.4%) on autonomous desktop tasks — navigating operating systems, using applications, and completing multi-step workflows entirely through screen interaction.

Tool Search: A Game-Changer

An equally important innovation is tool search in the API. Instead of pre-loading all tool definitions into the prompt, GPT-5.4 can search for specific tools as needed — reducing token usage by 47% while maintaining accuracy. In tests with 250 tasks from the MCP Atlas benchmark, tool search proved that smarter tool selection can dramatically cut costs without sacrificing performance.

However, Gemini 3.1 Pro leads MCP Atlas at 69.2% vs GPT-5.4's 67.2% — a 2-point advantage on multi-tool orchestration that reflects Gemini's design focus on breadth over depth.

For teams building agentic workflows, the practical takeaway is clear: GPT-5.4 for autonomous desktop operations, Gemini for multi-tool orchestration at scale.

Pricing & Cost Analysis

Pricing varies 15× between the cheapest and most expensive options. Understanding the cost-performance tradeoffs is critical for production deployments.

Standard Tier Pricing

| Model | Input (per MTok) | Output (per MTok) | Context Window |

|---|---|---|---|

| Gemini 3.1 Pro | $2.00 | $12.00 | 2M tokens |

| GPT-5.4 | $2.50 | $15.00 | 1M (Codex) / 272K |

| Claude Opus 4.6 | $5.00 | $25.00 | 200K (1M beta) |

| GPT-5.4 Pro | $30.00 | $180.00 | 272K |

| GPT-5.2 | $1.75 | $14.00 | 400K |

| Sonnet 4.6 | $3.00 | $15.00 | 200K (1M beta) |

Cost-Performance Analysis

The cost-per-benchmark analysis reveals where each model delivers the best value:

- Best reasoning value: Gemini 3.1 Pro matches GPT-5.4 Pro's 94.3% GPQA Diamond at $2 vs $30 — a 15× cost reduction for equivalent reasoning performance.

- Best knowledge work value: GPT-5.4 at $2.50 offers the best value for GDPval and OSWorld, since no cheaper model matches these scores.

- Best coding value: Opus 4.6 at $5 is the cheapest path to 80.8% SWE-Bench Verified production coding. For budget-sensitive teams, GPT-5.2 at $1.75 delivers 80.0% SWE-Bench — very competitive at 65% lower cost.

Context Window Tradeoffs

Context window size creates additional tradeoffs:

- Gemini 3.1 Pro (2M tokens) — ideal for analyzing entire codebases or processing long documents in a single pass

- GPT-5.4 (1M in Codex, 272K standard) — requests over 272K tokens in Codex count at double rate

- Claude Opus 4.6 (200K standard, 1M beta) — prompts over 200K tokens use premium pricing ($10/$37.50 per MTok)

According to TrendingTopics, GPT-5.4 also offers batch and flex pricing at half the standard price, while priority processing is available at double the standard price. This makes GPT-5.4's effective floor price just $1.25 per MTok input for batch workloads.

Model Selection Guide

The decision framework is straightforward once you identify your primary workflow. Match your most common task type to the model that leads that category.

Choose GPT-5.4 When:

- Knowledge work is your primary output — reports, data analysis, spreadsheets, presentations

- You need autonomous computer use — desktop navigation, web automation, software testing

- You want the lowest hallucination rate among the three (33% fewer false statements vs GPT-5.2)

- Tool-heavy API workflows where tool search can reduce token costs by 47%

Choose Claude Opus 4.6 When:

- Production coding is your core workflow — bug fixing, code review, multi-file refactoring

- You need deep adaptive reasoning for complex debugging and edge-case analysis

- 128K max output matters — generating entire multi-file patches without truncation

- You want Claude Code integration for agentic terminal-based development

Choose Gemini 3.1 Pro When:

- Cost is a primary constraint — 60% cheaper than Opus per input token, 20% cheaper than GPT-5.4

- You need to process very long documents — 2M context processes 10× more than Opus's standard tier

- Abstract reasoning breadth matters more than coding precision (GPQA Diamond 94.3%)

- Multi-tool orchestration is your pattern (MCP Atlas leader at 69.2%)

The Multi-Model Strategy

Based on the research from DigitalApplied, the most practical approach for teams is a multi-model routing strategy:

- Route knowledge work and computer use tasks → GPT-5.4

- Route production coding and debugging → Claude Opus 4.6

- Route long-context analysis and budget-sensitive tasks → Gemini 3.1 Pro

Using an API gateway or model router, switching costs approach zero. This captures each model's strengths rather than forcing a single-model compromise.

What the Community Says

The developer community's reaction to GPT-5.4 has been nuanced. Based on discussions across Reddit, Hacker News, and developer forums, several themes emerge:

Benchmarks vs. Real-World Feel

The most consistent sentiment is skepticism toward benchmark-only comparisons. Many developers report that Claude "feels smarter" in practice despite GPT-5.4's benchmark leads. Users praise Claude for having a better "attitude" and producing less verbose output compared to GPT-5.4's more elaborate system prompts.

"Claude feels way smarter than the benchmarks suggest. GPT-5.4 might win on paper, but Claude's coding intuition is still unmatched." — Reddit user in r/ClaudeAI

Coding: Developers Still Loyal to Claude

Heavy coding users overwhelmingly prefer Claude Opus 4.6 for practical software engineering, despite GPT-5.4's strong Terminal-Bench numbers. Claude's "nuanced developments" and seamless integration with Claude Code make it the default choice for professional developers.

That said, several users note GPT-5.4 excels at "finding holes" — identifying edge cases, security vulnerabilities, and logical inconsistencies in existing code. The models appear to have genuinely different reasoning styles.

Price Advantage Is Real

One point where the community largely agrees: GPT-5.4 is significantly cheaper than Opus at approximately half the API cost ($2.50/$15 vs $5/$25). For teams running high-volume inference, this cost difference compounds quickly. At subscription level, both services cost $20/month, but users report more generous usage limits from OpenAI.

Overall Sentiment

The community is cautiously loyal to Claude while acknowledging GPT-5.4's technical leadership on specific benchmarks. The general feeling is that OpenAI may be optimizing for benchmark performance while Anthropic focuses on real-world developer utility. Both approaches have merit — and most power users are already running both.

Frequently Asked Questions

Is GPT-5.4 better than Claude Opus 4.6?

It depends on your use case. GPT-5.4 leads on knowledge work (GDPval 83%), computer use (OSWorld 75%), and terminal-based coding (Terminal-Bench 75.1%). Claude Opus 4.6 leads on production coding (SWE-Bench 80.8%), visual reasoning (MMMU Pro 85.1%), and web browsing (BrowseComp 84%). Neither is universally "better."

Which model is cheapest for production use?

Gemini 3.1 Pro at $2/$12 per MTok is the cheapest frontier model. GPT-5.4 follows at $2.50/$15, and Claude Opus 4.6 is the most expensive at $5/$25. For batch processing, GPT-5.4 drops to $1.25/$7.50 with batch pricing.

Can I use GPT-5.4 for coding in Codex?

Yes. OpenAI Codex now supports GPT-5.4 with an experimental 1M token context window. GPT-5.4 achieves Terminal-Bench 2.0 score of 75.1% and SWE-Bench Pro of 57.7%. The /fast mode offers up to 1.5× faster token speed.

What is the context window of Gemini 3.1 Pro?

Gemini 3.1 Pro offers a 2M token context window — the largest among the three models. This is 10× Opus's standard 200K and 2× GPT-5.4's 1M Codex mode. For prompts over 200K tokens, Gemini charges a higher tier of $4/$18 per MTok.

Is GPT-5.4 available now?

Yes. GPT-5.4 is available in ChatGPT for Plus, Team, and Pro users, and through the API. GPT-5.4 Pro ($30/$180 per MTok) offers enhanced reasoning for research-grade tasks.

Which model is best for coding?

Claude Opus 4.6 narrowly leads on SWE-Bench Verified (80.8% vs Gemini's 80.6%), but GPT-5.4 dominates Terminal-Bench 2.0 (75.1%) for sustained terminal-heavy coding. For best results, use Opus for code generation and review, and GPT-5.4 for terminal-based agentic coding in tools like Codex or Cursor.

Should I switch to GPT-5.4 from Claude right now?

Don't hard-switch immediately. Run GPT-5.4 side-by-side in your evaluation suite. The pragmatic approach: ship with your current model (Claude Opus 4.6 or Gemini 3.1 Pro), add GPT-5.4 to your eval pipeline, and migrate specific workloads where it demonstrably outperforms.

What about DeepSeek V4?

DeepSeek V4 is currently in early access and could disrupt the budget tier when fully released. It's worth monitoring but not yet a factor in production model selection for March 2026.

Conclusion

The March 2026 frontier model landscape is the most competitive it has ever been — and that's great news for developers.

GPT-5.4 represents OpenAI's most significant leap forward, particularly in applied professional work and autonomous computer control. The 83% GDPval score and 75% OSWorld performance establish new benchmarks for what AI can do in real-world professional settings.

Claude Opus 4.6 remains the developer's instinctive choice for coding, with the deepest adaptive reasoning and the tightest integration into developer workflows through Claude Code.

Gemini 3.1 Pro is the value play that refuses to be ignored — matching or exceeding the other two on abstract reasoning at a fraction of the cost, with a 2M context window that opens entirely new use-case categories.

The era of a single "best model" is over. Smart teams in 2026 are adopting multi-model routing strategies that leverage each model's strengths. The cost of switching between models through API gateways is near zero — the only real investment is in evaluation infrastructure.

Ready to explore these models? Check out our tool pages for ChatGPT (GPT-5.4), Claude Opus 4.6, and Google Gemini 3.1 Pro for detailed pricing, features, and getting-started guides.

Sources: DigitalApplied, EvoLink, TrendingTopics, Reddit r/ClaudeAI.

Last Updated: March 2026